Semantic Layer

A deep dive into the semantic layer, the critical architectural component that abstracts physical data complexity into governed, business-friendly logic for unified enterprise analytics and AI.

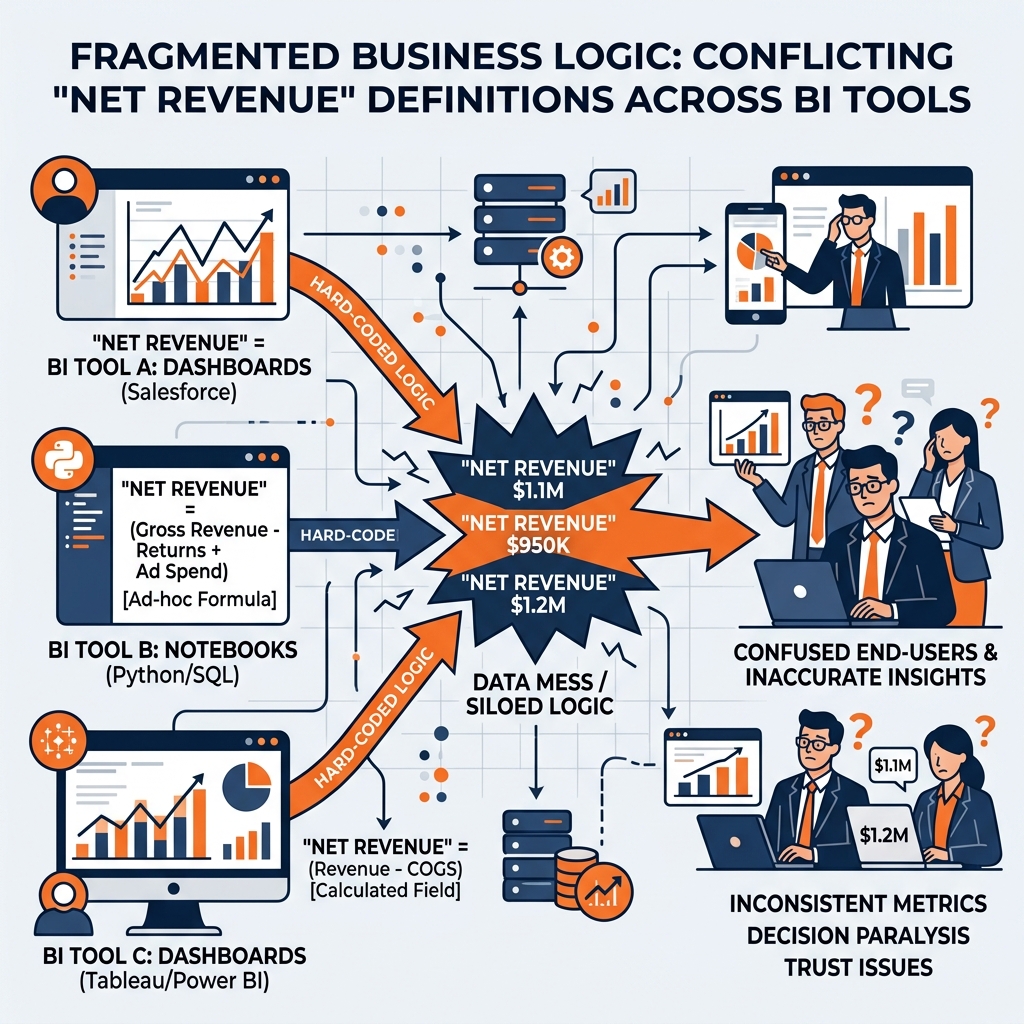

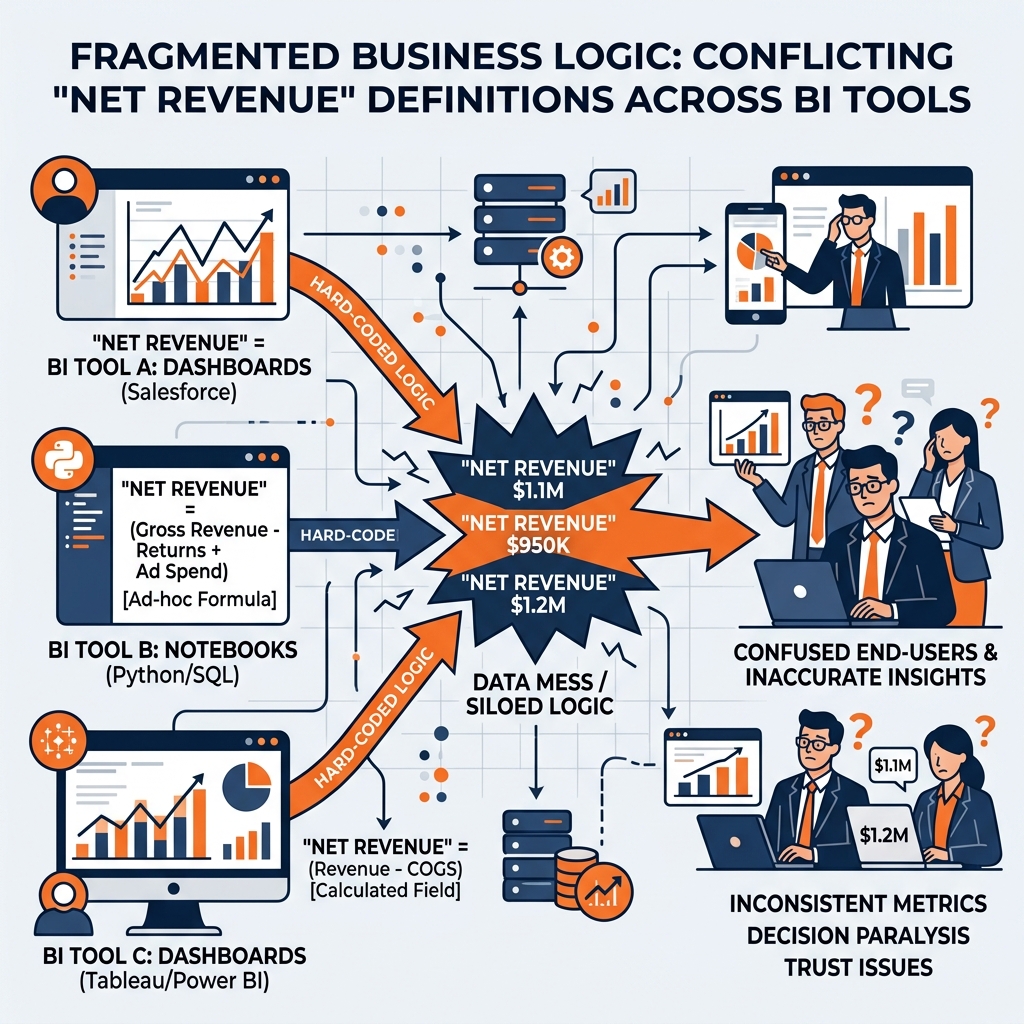

The Crisis of Fragmented Business Logic

To understand the fundamental necessity of a universal semantic layer, one must first examine the chronic dysfunction that plagued enterprise data architectures prior to its adoption. Historically, organizations attempted to deliver analytics by building direct, point-to-point connections between physical data storage (such as data warehouses or data lakes) and downstream consumption tools (such as Business Intelligence dashboards or data science notebooks).

In this legacy architecture, raw tables containing billions of rows of event and transaction data were exposed directly to end users. Because raw physical data carries no business context, the responsibility for applying logic and defining metrics fell entirely on the individuals building the reports. If an analyst needed a dashboard displaying “Active Customers,” they had to manually write SQL joins connecting the raw users table to the subscriptions table, and then hard-code a WHERE clause to filter out canceled accounts.

This approach inevitably led to the crisis of fragmented business logic. In any large organization, multiple departments utilize different tools to interact with data. The marketing team might build their dashboards in Tableau, the finance team might rely on Power BI, and the data science team might extract data using Python scripts in Jupyter Notebooks. Because the business logic was not centralized, every single team was forced to recreate the definition of “Active Customers” independently within their respective, proprietary tools.

Predictably, human error and differing departmental interpretations resulted in wildly inconsistent definitions. The marketing team’s Tableau dashboard might define an active customer as anyone who logged in within the last 30 days, while the finance team’s Power BI report might define it as anyone with a paid invoice in the current fiscal quarter. When executive leadership attempted to reconcile these reports during a quarterly review, the numbers never matched. This phenomenon, known as the “multiple versions of the truth” anti-pattern, destroyed organizational trust in the data platform.

Furthermore, this fragmentation created a heavy technical debt burden for data engineering teams. If the structure of the physical data warehouse changed (for instance, during a migration from a legacy on-premises database to a cloud data lakehouse), data engineers had to manually track down and rewrite thousands of SQL queries embedded across dozens of proprietary BI tools. Renaming a single physical column could trigger weeks of cascading dashboard failures across the enterprise. The industry needed an architectural mechanism to decouple physical data infrastructure from logical business definitions.

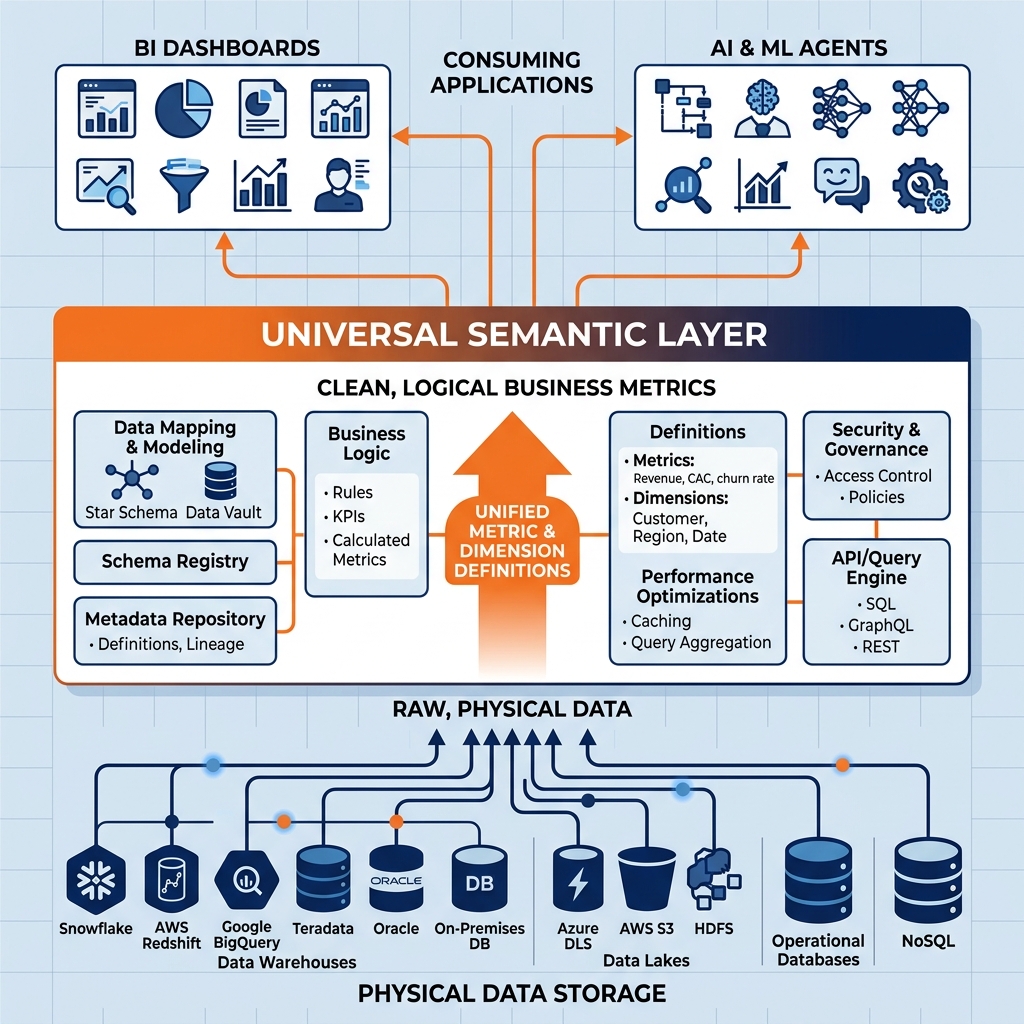

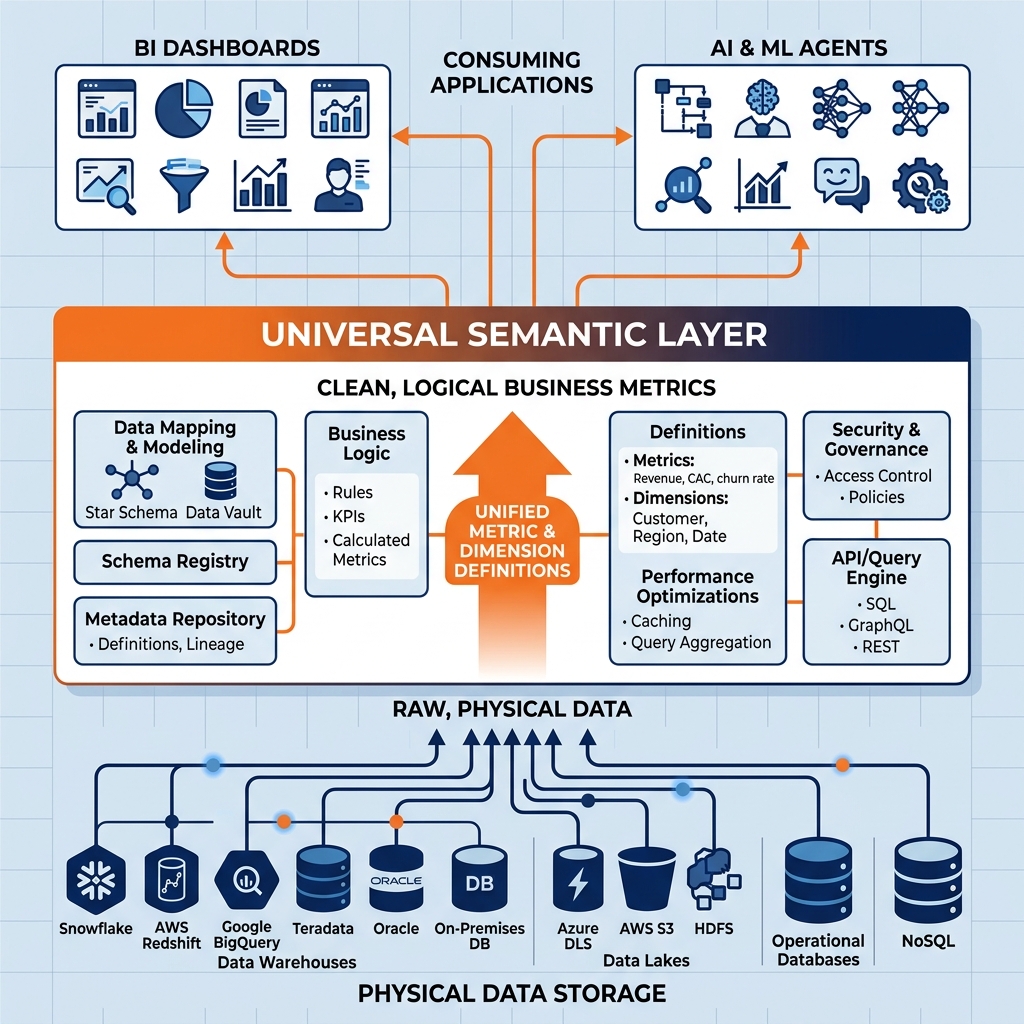

The semantic layer was conceptualized specifically to resolve this fragmentation. At its core, a semantic layer is a centralized logical abstraction that sits between the physical data storage infrastructure (the data warehouse, data lake, or lakehouse) and the downstream consumption applications (BI tools, AI agents, and custom applications).

Abstracting Physical Complexity

The primary function of the semantic layer is translation. It acts as an intelligent intermediary that translates the complex, highly technical reality of physical data storage into intuitive, business-friendly concepts that non-technical users can easily understand and query.

Physical data storage is designed for machine efficiency, not human comprehension. Data is often heavily normalized across dozens of tables, column names follow terse technical conventions (e.g., cust_id_fk_idx, txn_amt_raw), and categorical values such as order status are frequently stored as cryptic integer codes rather than descriptive strings. When a semantic layer is implemented, data engineers use it to build a map over this physical complexity. They create logical entities (often called virtual datasets or models) that assign clear, standardized names to these fields (e.g., Customer ID, Transaction Amount). They define the join relationships between disparate tables once, directly within the semantic layer, so that downstream users never have to write a JOIN statement to connect sales data to geographic data.
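To make the mapping concrete, here is a minimal sketch that uses a SQLite view as a stand-in for a semantic-layer virtual dataset. The raw table and column names (`raw_txn`, `raw_cust`, `raw_geo`, `sts_cd`, and so on), the status codes, and the assumption that amounts are stored in cents are all hypothetical; a real semantic layer would define the entity in its own catalog rather than as a database view.

```python
import sqlite3

# Hypothetical raw tables: terse column names, amounts in cents, integer status codes.
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE raw_txn (txn_id INTEGER, cust_id_fk_idx INTEGER, txn_amt_raw INTEGER, sts_cd INTEGER);
CREATE TABLE raw_cust (cust_id INTEGER, geo_cd TEXT);
CREATE TABLE raw_geo (geo_cd TEXT, geo_nm TEXT);
INSERT INTO raw_txn VALUES (1, 10, 12500, 1), (2, 11, 4000, 2), (3, 10, 800, 1);
INSERT INTO raw_cust VALUES (10, 'US-CA'), (11, 'US-NY');
INSERT INTO raw_geo VALUES ('US-CA', 'California'), ('US-NY', 'New York');

-- Logical entity: business names, decoded codes, and joins defined once.
CREATE VIEW transactions AS
SELECT
    t.txn_id              AS "Transaction ID",
    t.cust_id_fk_idx      AS "Customer ID",
    t.txn_amt_raw / 100.0 AS "Transaction Amount",
    CASE t.sts_cd WHEN 1 THEN 'Completed' WHEN 2 THEN 'Refunded' ELSE 'Unknown' END AS "Status",
    g.geo_nm              AS "State"
FROM raw_txn AS t
JOIN raw_cust AS c ON c.cust_id = t.cust_id_fk_idx
JOIN raw_geo AS g ON g.geo_cd = c.geo_cd;
""")

# A downstream user queries business names only: no JOIN, no code lookup.
rows = conn.execute("""
    SELECT "State", SUM("Transaction Amount")
    FROM transactions
    WHERE "Status" = 'Completed'
    GROUP BY "State"
    ORDER BY "State"
""").fetchall()
print(rows)  # [('California', 133.0)]
```

The analyst's query filters on "Status" and groups by "State" without knowing that either value required a join or a code lookup.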

Defining the Universal Metric

Beyond naming conventions, the semantic layer serves as the single source of truth for all organizational metrics and business logic. Instead of allowing individual analysts to define what constitutes “Net Revenue” in their local BI tools, the data engineering team codes the exact formula for “Net Revenue” directly into the semantic layer.

When a user in Tableau requests the “Net Revenue” for Q3, they simply drag the logical Net Revenue field onto their visualization. The BI tool sends this high-level, logical request to the semantic layer, which translates it into the physical SQL required to execute the calculation, retrieves the data from the lakehouse, and returns the result to the BI tool. If a user in Power BI makes the same request, the semantic layer executes the same underlying logic. Because every metric is defined in one place, every dashboard, report, and machine learning model that queries through the semantic layer reports the same numbers, eliminating the “multiple versions of the truth” anti-pattern.
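The request flow above can be sketched in a few lines of Python. This is a hypothetical toy, not any vendor's API: `METRICS`, `DIMENSIONS`, and `compile_request` are illustrative names, and the Net Revenue formula (gross minus discounts minus refunds) is an assumed definition. The point is that both "BI tools" send the same logical request and receive SQL generated from one shared definition.

```python
import sqlite3

# Hypothetical central catalog: each metric and dimension is defined exactly once.
METRICS = {"Net Revenue": "SUM(gross_amount - discount_amount - refund_amount)"}
DIMENSIONS = {"Quarter": "fiscal_quarter", "Region": "region"}
SOURCE_TABLE = "sales"  # hypothetical physical table


def compile_request(metric: str, group_by: list[str], filters: dict[str, str]) -> tuple[str, list[str]]:
    """Translate a logical request into physical SQL plus bound parameters."""
    dims = [DIMENSIONS[d] for d in group_by]
    sql = f"SELECT {', '.join(dims + [METRICS[metric] + ' AS metric_value'])} FROM {SOURCE_TABLE}"
    params = list(filters.values())
    if filters:
        sql += " WHERE " + " AND ".join(f"{DIMENSIONS[d]} = ?" for d in filters)
    if dims:
        sql += " GROUP BY " + ", ".join(dims)
    return sql, params


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (fiscal_quarter TEXT, region TEXT, "
             "gross_amount REAL, discount_amount REAL, refund_amount REAL)")
conn.executemany("INSERT INTO sales VALUES (?, ?, ?, ?, ?)", [
    ("Q3", "EMEA", 1000.0, 50.0, 25.0),
    ("Q3", "AMER", 2000.0, 100.0, 0.0),
    ("Q2", "AMER", 500.0, 0.0, 0.0),
])

# Two different BI tools send the same logical request...
from_tableau = compile_request("Net Revenue", [], {"Quarter": "Q3"})
from_power_bi = compile_request("Net Revenue", [], {"Quarter": "Q3"})
# ...and receive SQL generated from the same definition.
assert from_tableau == from_power_bi
print(conn.execute(*from_tableau).fetchone()[0])  # 2825.0
```

Metric and dimension names are looked up in a fixed catalog, so only governed identifiers ever reach the SQL text, while filter values are passed as bound parameters.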

The architectural brilliance of the semantic layer lies in its ability to enforce a strict separation of concerns between physical infrastructure, logical definitions, and presentation interfaces. This decoupling provides organizations with unprecedented agility, preventing vendor lock-in and insulating end-users from the disruptive realities of backend infrastructure migrations.

Reverting BI to the Presentation Layer

Prior to the semantic layer, Business Intelligence platforms like Tableau, Looker, and Power BI were forced to act as dual-purpose engines. They were responsible for rendering beautiful visual dashboards (their intended purpose), but they were also heavily burdened with storing massive amounts of proprietary business logic, complex data modeling, and caching mechanisms. This resulted in extreme vendor lock-in. Once an organization embedded ten years of complex financial logic into Tableau’s proprietary modeling language, migrating to a competitor like Power BI became a multi-million-dollar engineering nightmare, as none of the logic could be exported or reused.

The semantic layer strips this burden away from the BI tools. By pulling all data modeling, join logic, and metric definitions down into the centralized layer, BI platforms revert to being purely presentation layers: thin, interchangeable panes of glass. Because the logic is maintained in a vendor-neutral semantic engine, an organization can swap out its BI tool, or run multiple BI tools simultaneously, without rewriting a single line of business logic.

Insulating Against Infrastructure Churn

Conversely, the semantic layer insulates the business from the constant churn of backend physical storage. Data engineering is a rapidly evolving field. An organization might currently store their data in a proprietary cloud data warehouse, but may decide next year to migrate to an open data lakehouse powered by Apache Iceberg and Amazon S3 to reduce compute costs.

In a legacy architecture without a semantic layer, moving the physical data to a new database means breaking every single BI dashboard in the company, as their hard-coded connection strings and SQL syntax are tied directly to the old database schema.

With a semantic layer in place, the migration is entirely invisible to the business. The data engineering team simply repoints the backend connections of the semantic layer to the new Iceberg tables on S3. The logical virtual datasets, metric names, and API endpoints exposed to the BI tools remain completely unchanged. The business analysts come to work the next morning, refresh their dashboards, and receive their data seamlessly, completely unaware that the massive physical infrastructure beneath them was entirely replaced overnight. This profound decoupling allows engineering teams to continuously modernize the backend without ever disrupting the business.
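A minimal sketch of the repointing idea, under stated assumptions: two in-memory SQLite databases play the legacy warehouse and the new lakehouse, and the hypothetical `SemanticLayer` class holds the only mapping from logical names to physical tables and columns. The dashboard-facing call, `total_by("Order Amount", "Region")`, stays identical before and after the migration.

```python
import sqlite3

# Legacy warehouse (hypothetical schema)...
legacy = sqlite3.connect(":memory:")
legacy.execute("CREATE TABLE ORDERS_V1 (ORD_AMT REAL, RGN TEXT)")
legacy.executemany("INSERT INTO ORDERS_V1 VALUES (?, ?)", [(100.0, "EMEA"), (50.0, "AMER")])

# ...and the migrated lakehouse table (also hypothetical), with different names.
lakehouse = sqlite3.connect(":memory:")
lakehouse.execute("CREATE TABLE orders (order_amount REAL, sales_region TEXT)")
lakehouse.executemany("INSERT INTO orders VALUES (?, ?)", [(100.0, "EMEA"), (50.0, "AMER")])


class SemanticLayer:
    """Stable logical names on top of a swappable physical mapping."""

    def __init__(self, conn, table, columns):
        self.repoint(conn, table, columns)

    def repoint(self, conn, table, columns):
        # `columns` maps logical names to this backend's physical column names.
        self.conn, self.table, self.columns = conn, table, columns

    def total_by(self, measure, dimension):
        m, d = self.columns[measure], self.columns[dimension]
        sql = f"SELECT {d}, SUM({m}) FROM {self.table} GROUP BY {d} ORDER BY {d}"
        return self.conn.execute(sql).fetchall()


layer = SemanticLayer(legacy, "ORDERS_V1", {"Order Amount": "ORD_AMT", "Region": "RGN"})
before = layer.total_by("Order Amount", "Region")

# The migration touches only the backend mapping; the consumer call is unchanged.
layer.repoint(lakehouse, "orders", {"Order Amount": "order_amount", "Region": "sales_region"})
after = layer.total_by("Order Amount", "Region")
print(before == after)  # True
```

Only `repoint` ever sees physical names, which is the separation of concerns the section describes.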

Virtual Datasets vs Physical Materializations

To implement a semantic layer effectively, data architects must decide how the logical abstractions will actually execute against the underlying physical data. Historically, the industry relied heavily on physical materializations (often referred to as OLAP cubes or materialized views) to deliver fast BI performance. However, modern architectures strongly favor virtual datasets. Understanding the technical distinction between these two approaches is critical for building a scalable semantic layer.

The Cost of Physical Materializations

In older semantic architectures, data engineers used complex ETL (Extract, Transform, Load) pipelines to physically pre-calculate and store the semantic metrics. For example, they would run a massive batch job overnight to calculate the exact revenue for every region, product, and salesperson, and write those results into a new, physical “cube” or table in the data warehouse. When the BI tool queried the semantic layer, it was simply reading this highly optimized, pre-calculated physical table.

While physical materialization delivers incredibly fast query performance, it is inherently brittle and expensive. It requires maintaining massive, duplicated copies of data, driving up storage costs. Furthermore, physical cubes are inflexible. If an analyst suddenly needs to drill down into a metric by a dimension that the data engineer did not foresee (e.g., analyzing revenue by the customer’s zip code instead of their state), the pre-calculated physical cube will fail to answer the query. The analyst must file an IT ticket, and the engineer must rewrite the ETL pipeline to include the new dimension, a process that can take weeks.
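The rigidity of a pre-calculated cube can be illustrated with a small Python sketch. The fact rows, zip codes, and the `build_cube`/`query_cube` helpers are hypothetical. The cube can roll revenue up along the dimensions it was built with, but it cannot drill below its grain, so a question about zip codes fails until the batch job is rebuilt.

```python
from collections import defaultdict

# Hypothetical fact rows: (state, zip_code, revenue)
RAW = [("CA", "94105", 120.0), ("CA", "90001", 80.0), ("NY", "10001", 200.0)]
POSITION = {"state": 0, "zip_code": 1}


def build_cube(rows, dims):
    """Pre-aggregate revenue by a fixed list of dimensions, like a nightly ETL job."""
    totals = defaultdict(float)
    for row in rows:
        totals[tuple(row[POSITION[d]] for d in dims)] += row[2]
    return {"dims": tuple(dims), "totals": dict(totals)}


def query_cube(cube, dim):
    """Answer 'revenue by <dim>' from the cube alone, rolling up if needed."""
    if dim not in cube["dims"]:
        raise LookupError(f"cube grain is {cube['dims']}; rebuild the ETL job to add {dim!r}")
    i = cube["dims"].index(dim)
    out = defaultdict(float)
    for key, revenue in cube["totals"].items():
        out[key[i]] += revenue
    return dict(out)


state_cube = build_cube(RAW, ["state"])
print(query_cube(state_cube, "state"))  # {'CA': 200.0, 'NY': 200.0}
try:
    query_cube(state_cube, "zip_code")  # a dimension nobody anticipated
except LookupError as err:
    print("Cannot answer:", err)
```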

The Agility of Virtual Datasets

Modern semantic layers operate almost entirely via Virtual Datasets. A virtual dataset is essentially a saved SQL query or logical definition; it contains absolutely no physical data itself. It acts purely as a real-time translation lens.

When a user queries a virtual dataset in a modern semantic layer, the engine parses the incoming request, injects the business logic (the joins, filters, and metric calculations) defined within the virtual dataset, and generates an optimized physical execution plan. It then pushes that plan down to the underlying data lakehouse, processes the raw data on the fly, and returns the result.
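Here is a minimal sketch of a virtual dataset as stored logic rather than stored rows, assuming SQLite as the execution engine. The catalog `VIRTUAL_DATASETS` holds only SQL text, and the hypothetical `run` helper injects each referenced definition as a common table expression (CTE) at query time. A real engine would parse the incoming query to discover the referenced datasets and optimize the combined plan; here the caller lists them explicitly to keep the example short.

```python
import sqlite3

# Physical data exists only in the underlying (hypothetical) raw tables.
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE raw_orders (id INTEGER, cust INTEGER, amt REAL, status TEXT);
CREATE TABLE raw_customers (id INTEGER, region TEXT);
INSERT INTO raw_orders VALUES (1, 1, 40.0, 'paid'), (2, 2, 60.0, 'paid'), (3, 1, 15.0, 'void');
INSERT INTO raw_customers VALUES (1, 'EMEA'), (2, 'AMER');
""")

# Hypothetical catalog: a virtual dataset is just named SQL text, with no rows.
VIRTUAL_DATASETS = {
    "paid_orders": """
        SELECT o.id AS order_id, o.amt AS amount, c.region
        FROM raw_orders AS o JOIN raw_customers AS c ON c.id = o.cust
        WHERE o.status = 'paid'
    """,
}


def run(user_sql: str, datasets: list[str]) -> list[tuple]:
    """Inject each referenced virtual dataset as a CTE, then execute the combined query."""
    ctes = ", ".join(f"{name} AS ({VIRTUAL_DATASETS[name]})" for name in datasets)
    return conn.execute(f"WITH {ctes} {user_sql}").fetchall()


rows = run(
    "SELECT region, SUM(amount) FROM paid_orders GROUP BY region ORDER BY region",
    ["paid_orders"],
)
print(rows)  # [('AMER', 60.0), ('EMEA', 40.0)]
```

Changing the definition of `paid_orders` changes every downstream result on the next query, with no data to copy or resynchronize.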

Virtual datasets provide far greater agility. Because the data is not locked into rigid, pre-calculated cubes, analysts can slice, dice, and drill down into metrics across any available dimension without requiring IT intervention. Virtual datasets also consume no storage beyond their definitions, reducing infrastructure costs and removing the need for fragile ETL synchronization pipelines. The challenge, however, is that executing large aggregations against raw storage in real time requires a powerful, specialized compute engine to deliver sub-second performance.

The Dremio Semantic Layer and Zero-ETL

Dremio recognized that the traditional approach of separating the semantic layer from the core compute engine created unnecessary network bottlenecks and degraded performance. To resolve this, Dremio was architected as a unified platform where a world-class, Arrow-native distributed SQL engine is natively fused with an enterprise-grade Semantic Layer.

Spaces, Folders, and Virtual Datasets

Within the Dremio Semantic Layer, raw physical data sources (like Amazon S3 buckets containing Iceberg tables, or relational databases like PostgreSQL) are connected and immediately abstracted. Data engineers and analysts interact with this data through an intuitive hierarchy of Spaces and Folders, entirely bypassing the cryptic physical file paths.

Engineers use standard SQL to construct Virtual Datasets (VDS) within these Spaces. A foundational VDS might simply rename columns and cast data types for a raw physical table. A higher-level VDS might join five foundational views together and apply complex financial calculations. Dremio maintains a strict lineage graph of all these virtual datasets, allowing engineers to visually trace exactly which physical tables impact which downstream BI dashboards, a critical capability for governance and impact analysis.
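Impact analysis over a lineage graph amounts to a graph traversal. The sketch below assumes a hypothetical lineage map (dataset names such as `staging.orders` and `postgres.crm.customers` are illustrative) and walks it breadth-first to find every dataset downstream of a physical table. It is not Dremio's lineage API, only the underlying idea.

```python
from collections import deque

# Hypothetical lineage: each dataset maps to the datasets or tables it reads from.
PARENTS = {
    "staging.orders": ["s3.lake.raw_orders"],
    "staging.customers": ["postgres.crm.customers"],
    "finance.revenue_by_region": ["staging.orders", "staging.customers"],
    "dashboards.exec_kpis": ["finance.revenue_by_region"],
}


def downstream_of(node: str) -> set[str]:
    """Every dataset that directly or transitively reads from `node`."""
    children: dict[str, list[str]] = {}
    for child, parents in PARENTS.items():
        for parent in parents:
            children.setdefault(parent, []).append(child)
    seen: set[str] = set()
    queue = deque([node])
    while queue:
        for child in children.get(queue.popleft(), []):
            if child not in seen:
                seen.add(child)
                queue.append(child)
    return seen


impacted = downstream_of("postgres.crm.customers")
print(sorted(impacted))
# ['dashboards.exec_kpis', 'finance.revenue_by_region', 'staging.customers']
```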

Achieving Sub-Second Performance with Data Reflections

The ultimate test of a virtual semantic layer is performance. If a user queries a Virtual Dataset that joins massive tables and performs heavy aggregations, processing that query in real time against raw object storage can take minutes, which is unacceptable for interactive BI.

Dremio solves this through Data Reflections. Data Reflections operate entirely behind the scenes of the Semantic Layer. They are intelligent, physical materializations of the data (stored as optimized Parquet files) that Dremio manages automatically. When an engineer enables a Data Reflection on a specific Virtual Dataset, Dremio pre-computes the expensive joins and aggregations.

Crucially, Data Reflections do not break the virtual abstraction. End users continue to query the logical Virtual Datasets exactly as before; they are completely unaware that Reflections exist. When the Dremio query optimizer receives a request, it algebraically evaluates the available Reflections. If a Reflection can satisfy the query faster than scanning the raw files, the optimizer seamlessly rewrites the query plan to read from the Reflection instead. This delivers the sub-second performance of a physical OLAP cube while retaining the absolute agility, dimensional freedom, and centralized governance of a purely virtual semantic layer.
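The substitution step can be illustrated with a simplified sketch. This is not Dremio's optimizer, which matches relational query plans algebraically; it only shows the core idea of aggregation matching under stated assumptions. A hypothetical `Reflection` is grouped by a set of dimensions and holds pre-summed measures; a query that groups by a subset of those dimensions and asks for a summed measure can be answered by rolling up the reflection's rows instead of scanning raw data. The caller uses the same `answer` call either way.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Reflection:
    """Hypothetical aggregation reflection: rows pre-grouped by `dims`, `measures` pre-summed."""
    name: str
    dataset: str  # the virtual dataset it accelerates
    dims: set
    measures: set
    rows: list    # materialized rows, as dicts for simplicity


def can_substitute(r, dataset, group_by, measure):
    # SUM can be rolled up from a finer grain, so a reflection grouped by a
    # superset of the requested dimensions can still answer the query.
    return r.dataset == dataset and set(group_by) <= r.dims and measure in r.measures


def answer(dataset, group_by, measure, reflections, scan_raw):
    """Serve SUM(measure) GROUP BY group_by from a matching reflection, else scan raw data."""
    for r in reflections:
        if can_substitute(r, dataset, group_by, measure):
            totals = defaultdict(float)
            for row in r.rows:
                totals[tuple(row[d] for d in group_by)] += row[measure]
            return r.name, dict(totals)
    return "raw scan", scan_raw(dataset, group_by, measure)


agg = Reflection(
    name="agg_region_month",
    dataset="business_metrics.sales_summary",
    dims={"region", "month"},
    measures={"net_revenue"},
    rows=[
        {"region": "EMEA", "month": "2024-07", "net_revenue": 300.0},
        {"region": "EMEA", "month": "2024-08", "net_revenue": 200.0},
        {"region": "AMER", "month": "2024-07", "net_revenue": 700.0},
    ],
)


def slow_path(dataset, group_by, measure):
    return {}  # placeholder for executing the full query against raw files


print(answer("business_metrics.sales_summary", ["region"], "net_revenue", [agg], slow_path))
# ('agg_region_month', {('EMEA',): 500.0, ('AMER',): 700.0})
print(answer("business_metrics.sales_summary", ["customer_id"], "net_revenue", [agg], slow_path)[0])
# raw scan
```

The roll-up is valid here only because SUM is re-aggregable; a measure such as a distinct count could not be derived from pre-aggregated rows this way.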

Enabling AI and Autonomous Agents

While the semantic layer was originally conceived to stabilize Business Intelligence reporting for human analysts, it has rapidly emerged as the most critical architectural prerequisite for the deployment of generative AI and autonomous agents within the enterprise.

The Hallucination Problem on Raw Data

Large Language Models (LLMs) are highly proficient at generating SQL, but they lack inherent business context. If an organization exposes a raw, physical data lakehouse directly to an AI agent, the agent is almost guaranteed to fail. The agent will encounter thousands of cryptically named tables lacking primary key definitions, undocumented foreign key relationships, and contradictory column names. When asked to determine “Net Revenue,” the agent will guess the join logic and hallucinate the metric formula, delivering a highly confident but entirely incorrect answer to the user.

The Universal AI Interface

The semantic layer provides the exact structured context that AI agents desperately need. Instead of pointing the LLM at the raw physical storage, organizations point the AI agent at the semantic layer.

The semantic layer presents the AI with a clean, heavily curated list of Virtual Datasets with natural language column names and pre-defined join relationships. The complex business logic (the definition of “Net Revenue”) has already been applied by the data engineering team within the Virtual Dataset. The AI agent no longer needs to deduce complex formulas or navigate physical file structures; it simply executes SELECT net_revenue FROM business_metrics.sales_summary.
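One way to constrain an agent, sketched here under stated assumptions, is to expose the semantic layer to it as a tool whose arguments must be governed names. `CATALOG`, `tool_description`, and `build_query` are hypothetical, and the `business_metrics.sales_summary` dataset follows the example above. Unknown datasets, metrics, or dimensions are rejected instead of being guessed.

```python
import json

# Hypothetical curated catalog exposed to the agent (no raw tables, no formulas to guess).
CATALOG = {
    "business_metrics.sales_summary": {
        "metrics": {"net_revenue": "Net Revenue as defined by the data engineering team"},
        "dimensions": {"region": "Sales region", "fiscal_quarter": "Fiscal quarter"},
    }
}


def tool_description() -> str:
    """Text for the agent's tool schema or prompt: governed names and their meanings."""
    return json.dumps(CATALOG, indent=2)


def build_query(dataset: str, metric: str, group_by: list[str]) -> str:
    """Accept only governed names; anything else is rejected rather than guessed."""
    entry = CATALOG.get(dataset)
    if entry is None or metric not in entry["metrics"]:
        raise ValueError(f"unknown dataset or metric: {dataset}.{metric}")
    unknown = [d for d in group_by if d not in entry["dimensions"]]
    if unknown:
        raise ValueError(f"unknown dimensions: {unknown}")
    if not group_by:
        return f"SELECT {metric} FROM {dataset}"
    dims = ", ".join(group_by)
    # Assumes the metric is additive, so summing it across rows is valid.
    return f"SELECT {dims}, SUM({metric}) AS {metric} FROM {dataset} GROUP BY {dims}"


print(build_query("business_metrics.sales_summary", "net_revenue", []))
# SELECT net_revenue FROM business_metrics.sales_summary
```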

By constraining the AI agent’s environment to the governed, pre-calculated logic of the semantic layer, organizations remove the need for the agent to guess join logic or invent metric formulas, eliminating that source of metric hallucinations. The semantic layer ensures that the autonomous agent uses the exact same definition of “Net Revenue” as the CEO reviewing the morning dashboard.

Conclusion

The semantic layer is the definitive translation engine of the modern data architecture. By decoupling physical infrastructure from logical business definitions, it eradicates the chaos of fragmented reporting, insulates the business from technological churn, and prevents vendor lock-in. When deployed natively within a high-performance execution engine like Dremio, it delivers the speed of traditional data warehouses without the cost and rigidity of physical cubes. Ultimately, the semantic layer transforms raw, chaotic data infrastructure into a unified, governed, and highly accessible data fabric, providing the indispensable foundation for both enterprise Business Intelligence and the future of Agentic AI.

Learn More

To dive deeper into these architectures and master the modern data ecosystem, check out the comprehensive books by Alex Merced available in our Books section.